Case Files

FindFace CIBR lets you manage case files and conduct investigations by analyzing the associated video footage and photo files. This functionality is available on the Case Files tab.


Video File Formats

Video footage used for case investigations is accepted in a wide variety of formats. Click here for the full list.

Case Investigation Workflow

A case investigation is conducted as follows:

Create a new case file. Specify the case name, the incident date, and the case ID and date in the registry.

Specify the case details and set up access permissions for the case.

Upload video footage and photo files from the crime scene. Set up video processing parameters if needed, then process the video. The system will return the human faces detected in it.

Analyze the detected faces. Determine which individuals are likely to be suspects, victims, or witnesses; all other individuals are considered irrelevant to the case. Link the faces to matching records in the global record index and to participants of other cases.

Fill in the case participant records: specify the participants' names and attach related documents.

Return to the case file to add new materials as the investigation progresses.

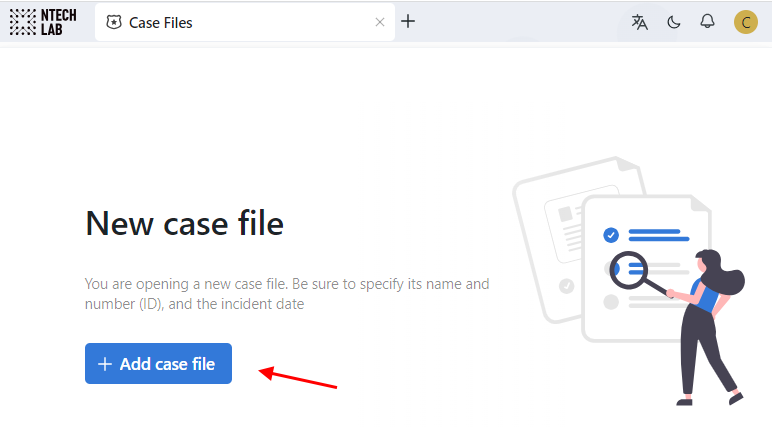

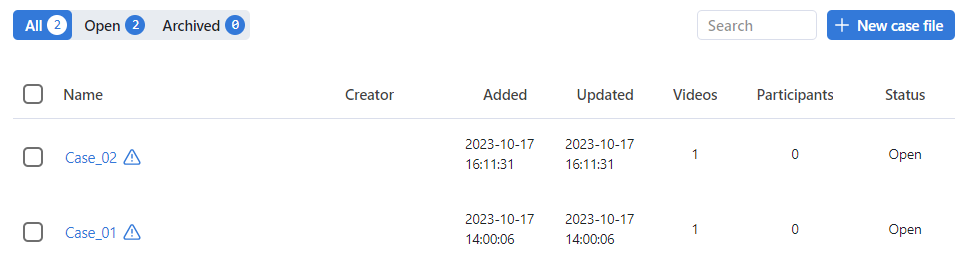

Create Case File

To create a case file, do the following:

Navigate to Case Files.

Click + Add case file.

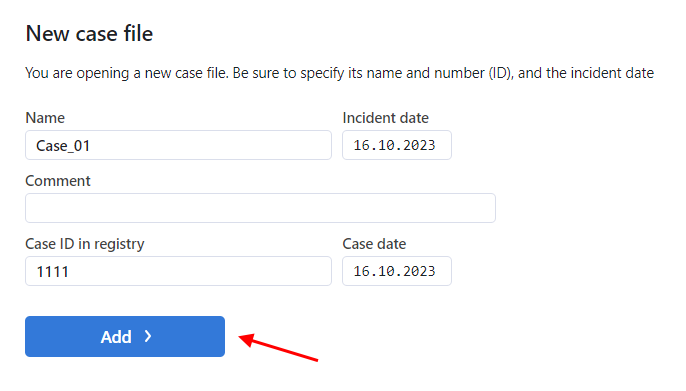

Enter a descriptive name for the case. Specify the incident date.

Add a comment, if needed.

Specify the case ID and date in the registry, if needed.

Click Add >. As a result, the case file will be added to the case list.
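The fields entered above can be pictured as a simple record. The following is a hypothetical Python sketch, not the FindFace CIBR data schema: the class and field names are assumptions, and it treats the name and incident date as mandatory only because the steps above mark the comment and registry details as optional.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CaseFile:
    """Hypothetical record for the fields entered in the Add case file form."""

    name: str                             # descriptive case name (assumed required)
    incident_date: date                   # incident date (assumed required)
    comment: str = ""                     # optional comment
    registry_id: Optional[str] = None     # optional case ID in the registry
    registry_date: Optional[date] = None  # optional date in the registry

    def __post_init__(self) -> None:
        # Reject an empty or whitespace-only name, since the name is required.
        if not self.name.strip():
            raise ValueError("case name must not be empty")


case = CaseFile(name="Warehouse break-in", incident_date=date(2024, 3, 14))
print(case.registry_id)  # None: no registry ID specified yet
```

Keeping the optional fields as defaults means a case can be created with only the two mandatory values and completed later.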

Upload and Process Video File

To upload and process CSI video footage, do the following:

Navigate to the Case Files tab.

Click the case on the case list to open it.

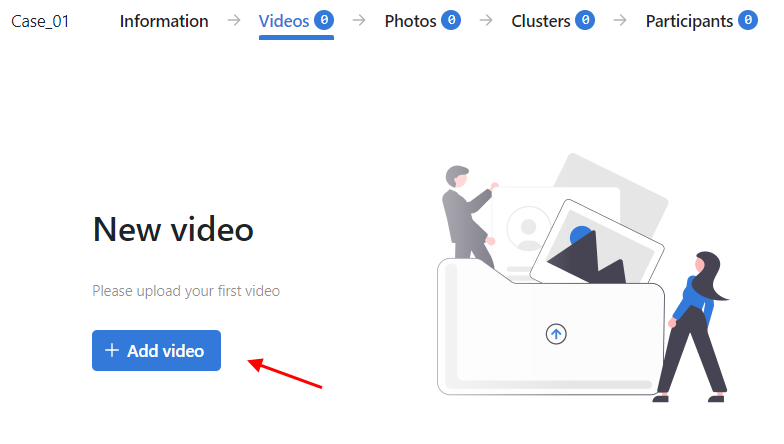

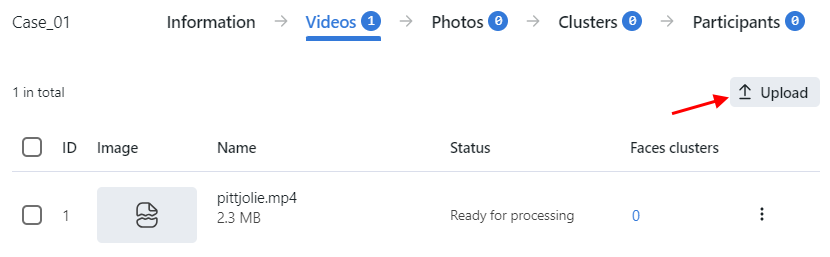

Navigate to the Videos tab.

Click the + Add video button.

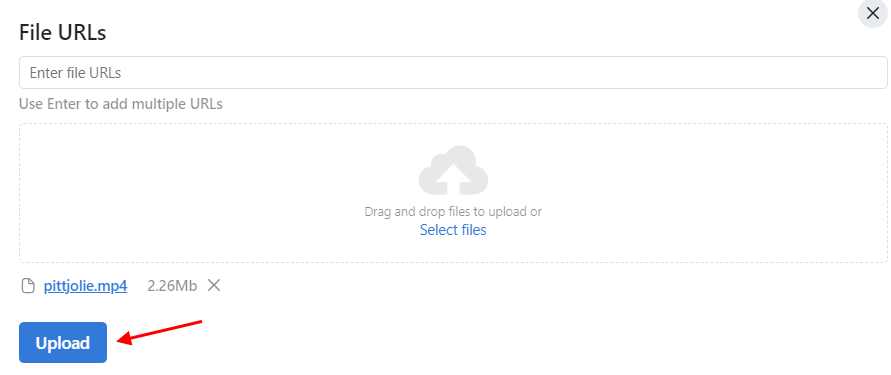

Specify a URL or select a file. Click Upload.

The video file will be added to the list of case video uploads. Click Upload to upload another video file.

Click the video on the list to open the processing configuration wizard. Set up the video processing parameters.

Video processing parameters

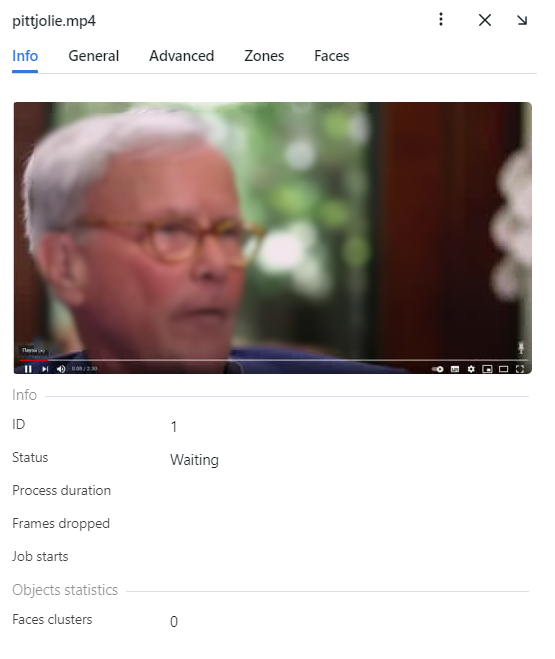

For each video, the system provides complete statistics, such as the current session duration, the number of objects processed with errors since the last job restart, the number of dropped frames, and other data. To view these statistics, click the name of the video on the list and go to the Info tab.

On the General tab, you can change the video name if needed.

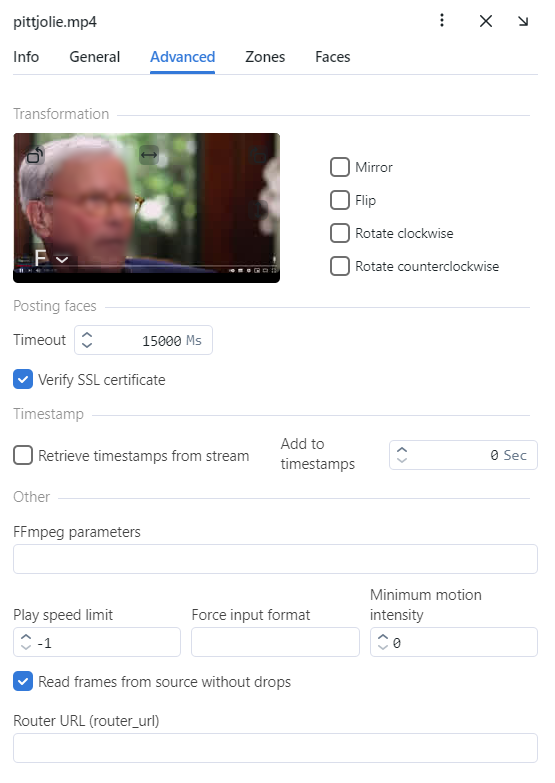

On the Advanced tab, fine-tune the video:

If needed, change the video orientation.

Important

Be aware that findface-multi-legacyserver rotates the video using post-processing tools, which can negatively affect performance. Rotate the video via the camera functionality wherever possible.

Timeout: Specify the timeout in milliseconds for posting detected objects.

Verify SSL certificate: Select to enable verification of the server SSL certificate when the object tracker posts objects to the server over HTTPS. Deselect the option if you use a self-signed certificate.

Retrieve timestamps from stream: Select to retrieve and post timestamps from the video stream. Deselect the option to post the current date and time.

Add to timestamps: Add the specified number of seconds to timestamps from the stream.

FFMPEG parameters: FFMPEG options for the video stream in the key-value format, for example, ["rtsp_transport=tcp", "ss=00:20:00"].

Play speed limit: If less than zero, the speed is not limited. Otherwise, the stream is read at the given play_speed. Not applicable to live streaming.

Force input format: Pass the FFMPEG format (mxg, flv, etc.) if it cannot be detected automatically.

Minimum motion intensity: Minimum motion intensity to be detected by the motion detector.

Read frames from source without drops: If findface-video-worker does not have enough resources to process all frames with objects, it drops some of them. If this option is active (true), findface-video-worker puts excess frames on a waiting list to process them later.

Router URL (router_url): IP address for posting detected objects to external video workers from findface-video-worker. By default, 'http://127.0.0.1'.
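The timestamp and FFMPEG settings above can be illustrated with a short Python sketch. It assumes that, with Retrieve timestamps from stream selected, the posted time is the stream timestamp plus the Add to timestamps offset, and otherwise the current time; it also assumes each FFMPEG parameter is a "key=value" string. The helper names posted_timestamp and parse_ffmpeg_params are hypothetical and do not reproduce findface-video-worker internals.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def posted_timestamp(stream_ts: Optional[datetime], use_stream_ts: bool,
                     add_seconds: float = 0.0) -> datetime:
    """Hypothetical: choose the timestamp posted with a detected object."""
    if use_stream_ts and stream_ts is not None:
        # "Retrieve timestamps from stream" is on: apply "Add to timestamps".
        return stream_ts + timedelta(seconds=add_seconds)
    # Option is off (or, by assumption, the stream has no timestamp):
    # post the current date and time.
    return datetime.now(timezone.utc)


def parse_ffmpeg_params(params: List[str]) -> Dict[str, str]:
    """Split "key=value" strings such as "rtsp_transport=tcp" into a dict."""
    options: Dict[str, str] = {}
    for item in params:
        key, sep, value = item.partition("=")
        if not sep or not key:
            raise ValueError(f"expected key=value, got {item!r}")
        options[key] = value
    return options


start = datetime(2024, 3, 14, 12, 0, 0, tzinfo=timezone.utc)
print(posted_timestamp(start, use_stream_ts=True, add_seconds=30))
# 2024-03-14 12:00:30+00:00
print(parse_ffmpeg_params(["rtsp_transport=tcp", "ss=00:20:00"]))
# {'rtsp_transport': 'tcp', 'ss': '00:20:00'}
```

Splitting on the first "=" only keeps values that themselves contain "=" intact.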

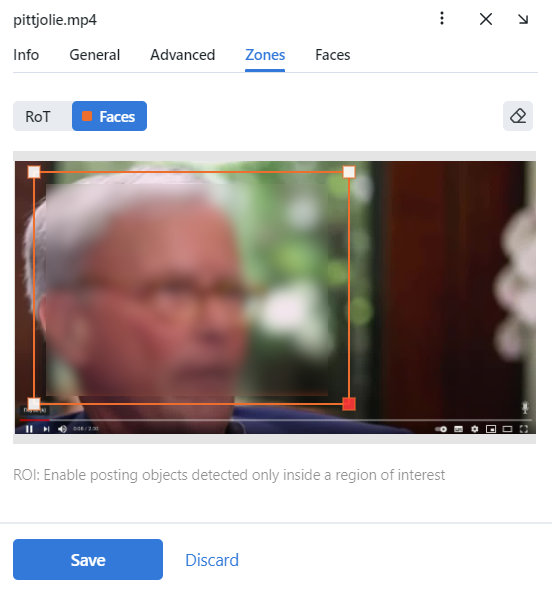

Specify the region of tracking within the camera field and the region of interest (Zones). Click Save.

On the Faces tab, specify settings for face detection.

Size: The minimum and maximum object size, in pixels, for an object to be posted.

Quality: The minimum quality of the object image for detection. The allowed range is from 0 to 1. The recommended reference value is 0.5, which corresponds to object images of satisfactory quality. Do not change the default value without consulting with our technical experts (support@ntechlab.com).

JPEG quality: Full frame compression quality.

Full frame in PNG: Send full frames in PNG instead of JPEG (the default). Do not enable this parameter without consulting with our technical experts (support@ntechlab.com) as it can affect the entire system functioning.

Overlap threshold: The minimum overlap of bboxes in two consecutive frames required for these bboxes to be considered one track. The range of values is from 0 to 1. Do not change the default value without consulting with our technical experts (support@ntechlab.com).

Track maximum duration: The approximate maximum number of frames in a track, after which the track is forcibly completed. Enable it to forcibly terminate endless tracks, for example, tracks with objects from advertising media.

Forced termination interval: Terminate the track if no new object has been detected within the specified time (in seconds).

Send track history: Send an array of bbox coordinates along with the event. This may be useful for external integrations that map the path of an object.

Crop full frame: Select to crop the full frame to the size of the ROT area before sending it for recognition. The size of the full frame will be equal to the size of the ROT area.

Offline mode (overall_only): By default, the system uses offline mode to process the video, i.e., it posts one best-quality snapshot per track to save disk space. Disable it to receive more object snapshots if needed. If offline mode is on, the real-time mode parameters are disabled.

Real-time mode parameters:

Note

These parameters are non-functional if the offline mode is on.

Interval: Time interval in seconds (integer or decimal) within which the object tracker picks the best snapshot in real-time mode.

Post first object immediately: Select to post the first object from a track immediately after it passes through the quality, size, and ROI filters, without waiting for the first realtime_post_interval to complete in real-time mode. Deselect the option to post the first object after the first realtime_post_interval completes.

Post track first frame: At the end of the track, the first frame of the track is sent in addition to the overall frame of the track. This may be useful for external integrations.

Post in every interval: Select to post the best snapshot within each time interval (realtime_post_interval) in real-time mode. Deselect the option to post the best snapshot only if its quality has improved compared to the previously posted snapshot.

Post track last frame: At the end of the track, the last frame of the track is sent in addition to the overall frame of the track. This may be useful for external integrations.
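The difference between offline mode and the real-time mode parameters can be modeled in a few lines. This is a simplified, hypothetical Python sketch rather than the findface-video-worker implementation: the Snapshot type and the function names are assumptions, the ROI filter is left out, and evaluating the last, unfinished interval when the track ends is a guess made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Snapshot:
    t: float        # seconds since the track started
    quality: float  # object image quality, 0..1
    size: int       # object size in pixels


def passes_filters(s: Snapshot, min_size: int, max_size: int,
                   min_quality: float) -> bool:
    # Size and Quality settings (the ROI check is omitted in this sketch).
    return min_size <= s.size <= max_size and s.quality >= min_quality


def offline_post(track: List[Snapshot], min_size: int = 0,
                 max_size: int = 10_000,
                 min_quality: float = 0.5) -> Optional[Snapshot]:
    """Offline mode (overall_only): one best-quality snapshot per track."""
    ok = [s for s in track if passes_filters(s, min_size, max_size, min_quality)]
    return max(ok, key=lambda s: s.quality, default=None)


def _should_post(candidate: Snapshot, posted: List[Snapshot],
                 every_interval: bool) -> bool:
    # "Post in every interval" off: post only if quality has improved.
    return every_interval or not posted or candidate.quality > posted[-1].quality


def realtime_posts(track: List[Snapshot], interval: float,
                   first_immediately: bool, every_interval: bool,
                   min_size: int = 0, max_size: int = 10_000,
                   min_quality: float = 0.5) -> List[Snapshot]:
    """Real-time mode: snapshots posted for one track, in posting order."""
    if interval <= 0:
        raise ValueError("interval must be positive")
    posted: List[Snapshot] = []
    best: Optional[Snapshot] = None  # best snapshot of the current interval
    window_end = interval
    for s in sorted(track, key=lambda snap: snap.t):
        # Close every interval that ended before this snapshot arrived.
        while s.t >= window_end:
            if best is not None and _should_post(best, posted, every_interval):
                posted.append(best)
            best = None
            window_end += interval
        if not passes_filters(s, min_size, max_size, min_quality):
            continue
        if first_immediately and not posted:
            posted.append(s)  # "Post first object immediately"
            continue
        if best is None or s.quality > best.quality:
            best = s
    # Assumption: the unfinished last interval is evaluated when the track ends.
    if best is not None and _should_post(best, posted, every_interval):
        posted.append(best)
    return posted


track = [Snapshot(0.1, 0.6, 120), Snapshot(0.3, 0.3, 120),
         Snapshot(0.5, 0.8, 120), Snapshot(1.2, 0.7, 120),
         Snapshot(1.8, 0.9, 120), Snapshot(2.5, 0.55, 120)]
print(offline_post(track).quality)  # 0.9
print([s.quality for s in realtime_posts(track, 1.0, False, True)])  # [0.8, 0.9, 0.55]
print([s.quality for s in realtime_posts(track, 1.0, True, False)])  # [0.6, 0.8, 0.9]
```

With Post in every interval on, each closed interval posts its best snapshot; with it off, a snapshot is posted only if it beats the last one posted. The 0.3-quality snapshot never appears because it fails the 0.5 quality filter.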

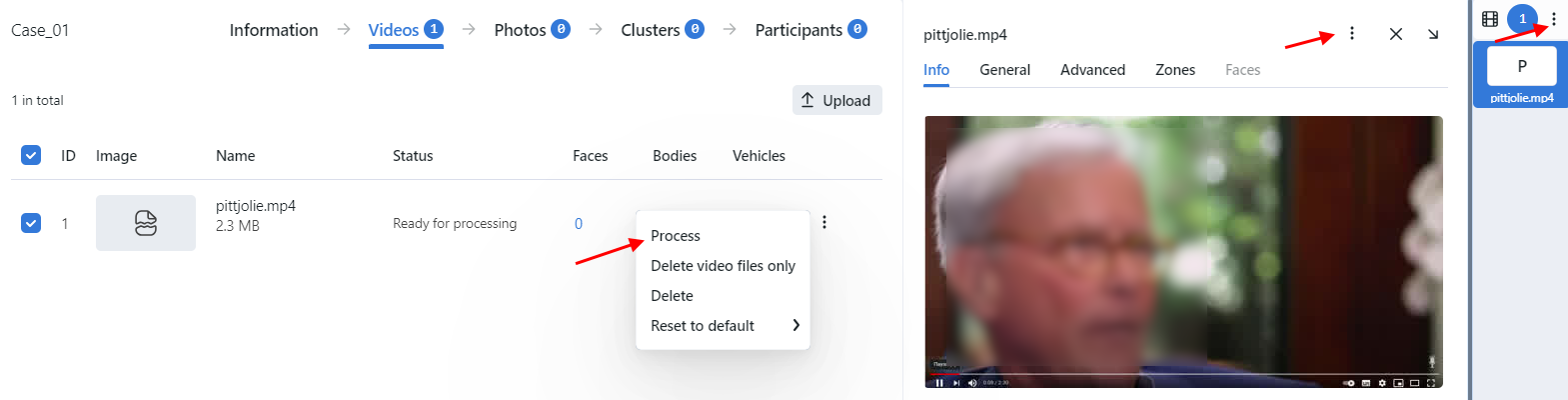

On the list of video uploads, click three dots → Process to start object identification.

The processing results will be available on the Clusters tab and on the Cluster events tab of the participant.
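The Overlap threshold, Track maximum duration, and Forced termination interval settings on the Faces tab govern how detections are grouped into tracks and when a track ends. The sketch below assumes intersection over union (IoU) as the overlap measure; the exact metric and termination rules used by findface-video-worker are not documented here and may differ, and all names are hypothetical.

```python
from typing import Optional, Tuple

BBox = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: BBox, b: BBox) -> float:
    """Intersection over union of two axis-aligned boxes, from 0 to 1."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def same_track(prev: BBox, curr: BBox, overlap_threshold: float) -> bool:
    # Overlap threshold: boxes in consecutive frames that overlap at least
    # this much are treated as the same track.
    return iou(prev, curr) >= overlap_threshold


def track_should_end(frames_in_track: int, seconds_since_last_object: float,
                     max_frames: Optional[int],
                     termination_interval: float) -> bool:
    # Track maximum duration (None means disabled in this sketch).
    if max_frames is not None and frames_in_track >= max_frames:
        return True
    # Forced termination interval: no new object within the given time.
    return seconds_since_last_object >= termination_interval


print(round(iou((0, 0, 10, 10), (5, 0, 15, 10)), 3))  # 0.333
print(same_track((0, 0, 10, 10), (5, 0, 15, 10), 0.3))  # True
```

A higher threshold splits a moving face into more, shorter tracks; a lower one risks merging different people into one track.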

Upload and Process Photo File

To upload and process a CSI photo file, do the following:

Navigate to Case Files.

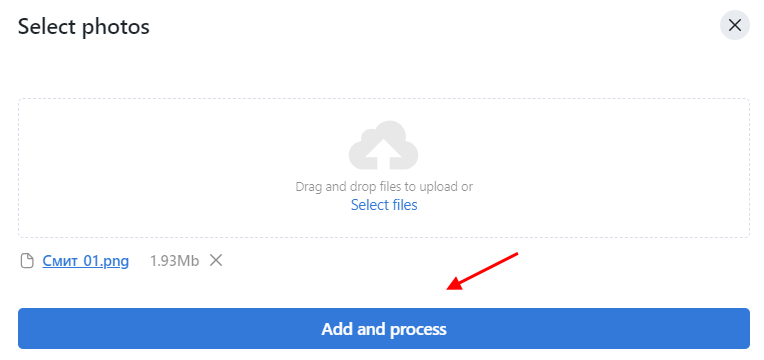

Click + Add photo.

Select photos to upload.

Click Add and process. As a result, the photo file will be added to the list of photo uploads and processed.

To restart object identification on the photo file, click three dots → Process.

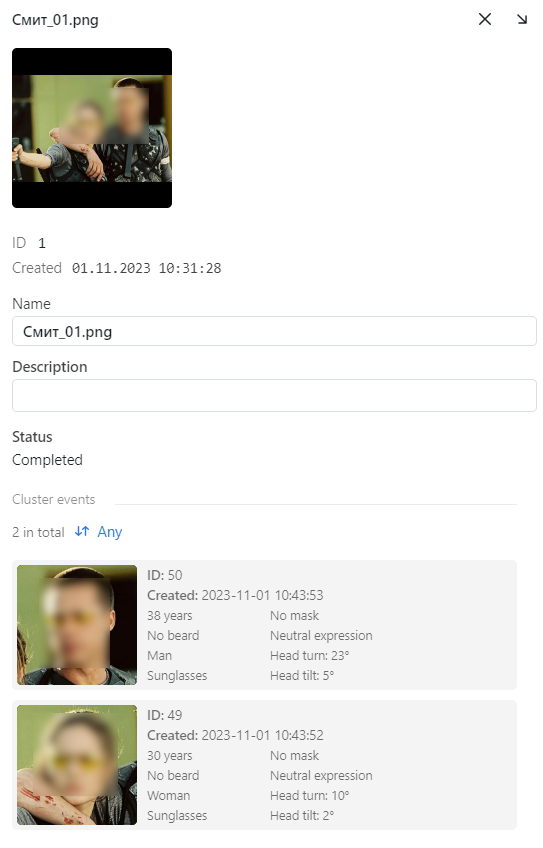

Click the name of an image on the list to view its cluster events, rename the photo file, or add a comment, if needed.

Detected People Analysis

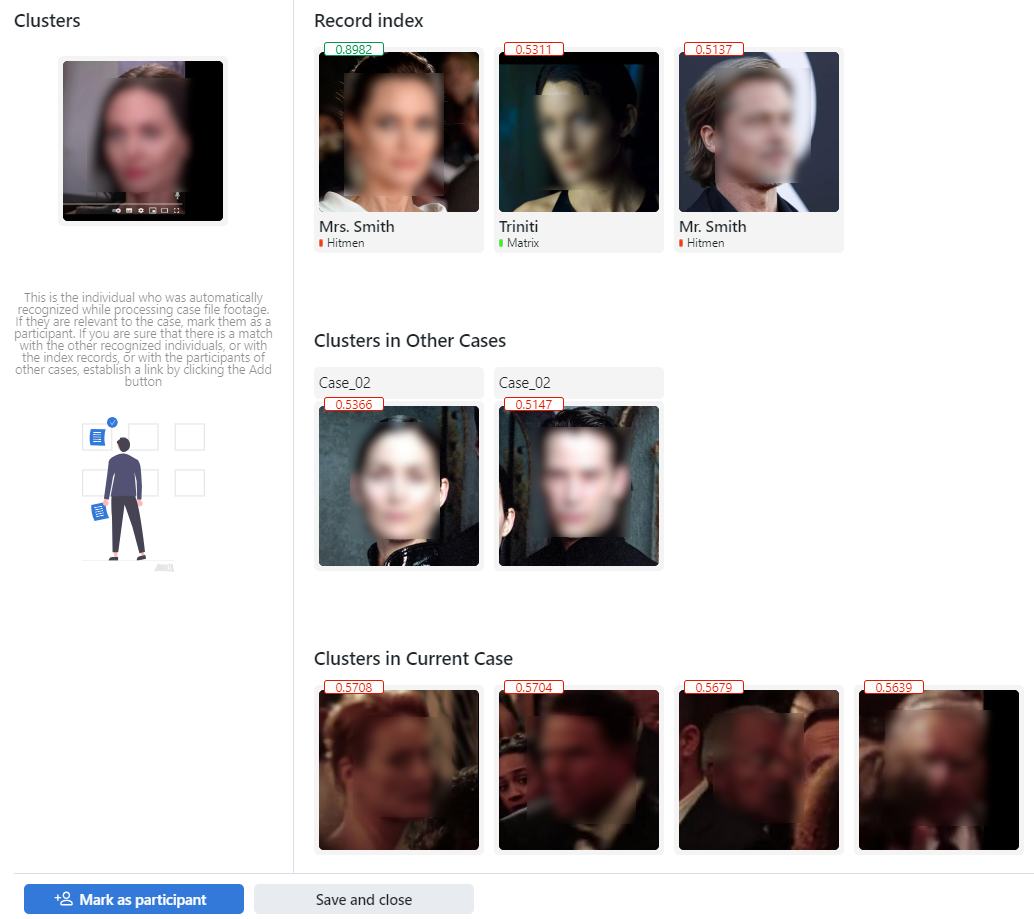

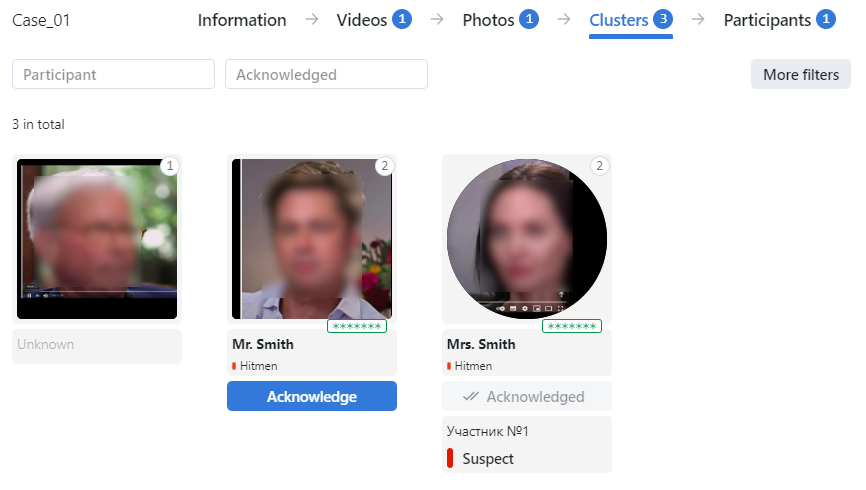

Face clusters detected in the video and photo files are shown on the Clusters tab. Here you sort them according to the role each person plays in the incident and establish links to relevant global records, other case files, participants of the same case, and other clusters.

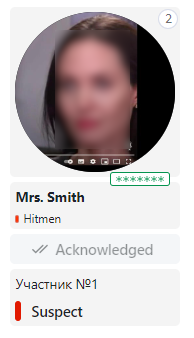

If a detected object has a match in the card index, a case cluster thumbnail contains the following information:

normalized object image

record name

the similarity between matched objects

watch list(s)

Acknowledge button

Click the Acknowledge button to acknowledge the case cluster.

To analyze detected people, do the following:

Click a face on the list.

Tip

If there are many clusters, use filters. Click the More filters button in the upper-right corner to display them.

Note

Some filters from the list below may be hidden, depending on which recognition features are enabled.

Participant: display only case clusters/only participants or any.

Acknowledged: display only unacknowledged clusters/only acknowledged clusters or any.

Matches: display only case clusters with/without a matching record, or all case clusters.

Watch lists: display case clusters only for a selected watch list.

Date and time: display only case clusters formed within a certain period.

ID: display a case cluster with a given ID.

Specific filters for face clusters

Age: display case clusters with people of a given age.

Beard: filter case clusters by whether the person has a beard.

Emotions: display case clusters with given emotions.

Gender: display case clusters with people of a given gender.

Glasses: filter case clusters by whether the person wears glasses.

Face mask: filter case clusters by whether the person wears a face mask.
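Conceptually, the filters above narrow the cluster list to the clusters that satisfy every selected criterion. The hypothetical Python sketch below models the Acknowledged, Matches, and Date and time filters over an in-memory list; the CaseCluster fields, the sample data, and the assumption that filters combine with AND are illustrative, not the FindFace CIBR API.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, List, Optional


@dataclass
class CaseCluster:
    cluster_id: int
    acknowledged: bool
    matched_record: Optional[str]  # name of the matching record, if any
    created: datetime


def filter_clusters(clusters: Iterable[CaseCluster],
                    acknowledged: Optional[bool] = None,
                    has_match: Optional[bool] = None,
                    since: Optional[datetime] = None,
                    until: Optional[datetime] = None) -> List[CaseCluster]:
    """None stands for "any"; all filters that are set must match (AND)."""
    result: List[CaseCluster] = []
    for c in clusters:
        if acknowledged is not None and c.acknowledged != acknowledged:
            continue
        if has_match is not None and (c.matched_record is not None) != has_match:
            continue
        if since is not None and c.created < since:
            continue
        if until is not None and c.created > until:
            continue
        result.append(c)
    return result


clusters = [
    CaseCluster(1, False, "Record A", datetime(2024, 3, 14, 10, 0)),
    CaseCluster(2, True, None, datetime(2024, 3, 14, 11, 0)),
    CaseCluster(3, False, None, datetime(2024, 3, 15, 9, 0)),
]
print([c.cluster_id for c in filter_clusters(clusters, acknowledged=False)])  # [1, 3]
print([c.cluster_id for c in filter_clusters(clusters, has_match=False,
                                             since=datetime(2024, 3, 15))])  # [3]
```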

Link the face to a matching record in the global record index and to matching participants of other case files by clicking the Add button that appears when you hover over the photo. You can also link the face to participants of the same case and to other clusters.

Click the face in the Clusters section to view the detected faces in full screen and, if needed, split some of them from the cluster.

Click Mark as participant.

Specify the participant name and type, and add a comment if necessary. This will create a new case participant record that you will be able to view on the Participants tab.

A participant can be one of the following types:

victim

witness

suspect

Click Save and close.

As a result, the information is added to the case cluster thumbnail, and the face image gets a round frame. The number in the upper-right corner of the case cluster thumbnail shows the number of cluster events. Click the number to view the image in full screen in a new window. You can split the face from the cluster, if needed.

You can reopen the connections wizard on the Clusters tab by clicking the participant’s face again.

To access the participant record, navigate to the Participants tab.

Case Participant Records

The Participants tab provides access to all participant data collected so far.

To view a case participant record, click the relevant face on the list.

On the Info tab, you can modify participant information, if needed.

On the Cluster events tab, you can view detected face images of the participant and other face images of the case cluster.

On the Connections tab, you can view connections of a participant with persons from other clusters.

FindFace CIBR allows you to search detected objects from the case participant records. To navigate from the case participant record to the search tab, click three dots → Search.

To reset a participant record during a case investigation, click three dots → Reset participant.

If there are many participants, use filters. Click the More filters button in the upper-right corner to display them.

Name contains: display participants by name.

Role: display participants for a selected role (Any/Victim/Witness/Suspect).

Date and time: display participants by case date within a certain period.
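The Name contains and Role filters can be sketched as a simple list filter. The Role values mirror the participant types victim, witness, and suspect; the Participant type, the function name, the sample names, and case-insensitive name matching are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List, Optional


class Role(Enum):
    VICTIM = "victim"
    WITNESS = "witness"
    SUSPECT = "suspect"


@dataclass
class Participant:
    name: str
    role: Role


def filter_participants(participants: Iterable[Participant],
                        name_contains: str = "",
                        role: Optional[Role] = None) -> List[Participant]:
    """role=None stands for "Any"; name matching is case-insensitive here."""
    needle = name_contains.casefold()
    return [p for p in participants
            if needle in p.name.casefold()
            and (role is None or p.role is role)]


people = [Participant("Anna Smith", Role.WITNESS),
          Participant("Mark Jones", Role.SUSPECT),
          Participant("Annette Lee", Role.VICTIM)]
print([p.name for p in filter_participants(people, name_contains="ann")])
# ['Anna Smith', 'Annette Lee']
print([p.name for p in filter_participants(people, role=Role.SUSPECT)])
# ['Mark Jones']
```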

Case File Archive

You can label a case file as archived to indicate that the case is closed or for any other reason.

To archive/unarchive case files, do the following:

On the Case Files tab, select one or several case files.

Click Archive selected to archive the case files. Click Delete selected to delete them.

Once a case is archived, it can no longer be modified. To add photos and video footage or modify participants, reopen the case: select it and click Open selected.

You can filter the case file list by archive status (All, Opened, Archived) or apply additional filters by clicking More filters:

Unacknowledged status: display cases by acknowledged status.

Date and time: display cases by date within a certain period.

ID: display a case with a given ID.
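The archive rules above (an archived case cannot be changed until it is opened again) and the status filter can be expressed as a small state model. This is a hypothetical Python sketch: Case, CaseArchivedError, and the functions named after the Archive selected and Open selected buttons are illustrative assumptions, not part of FindFace CIBR.

```python
from dataclasses import dataclass, field
from typing import List


class CaseArchivedError(Exception):
    """Raised when a change is attempted on an archived case."""


@dataclass
class Case:
    name: str
    archived: bool = False
    videos: List[str] = field(default_factory=list)

    def add_video(self, source: str) -> None:
        # An archived case is read-only until it is opened again.
        if self.archived:
            raise CaseArchivedError(f"case {self.name!r} is archived")
        self.videos.append(source)


def archive_selected(cases: List[Case]) -> None:
    for case in cases:
        case.archived = True


def open_selected(cases: List[Case]) -> None:
    for case in cases:
        case.archived = False


def filter_by_status(cases: List[Case], status: str) -> List[Case]:
    """status is "All", "Opened", or "Archived", like the case list filter."""
    if status == "All":
        return list(cases)
    if status == "Opened":
        return [c for c in cases if not c.archived]
    if status == "Archived":
        return [c for c in cases if c.archived]
    raise ValueError(f"unknown status: {status!r}")


a, b = Case("Case A"), Case("Case B")
archive_selected([a])
print([c.name for c in filter_by_status([a, b], "Archived")])  # ['Case A']
open_selected([a])
a.add_video("footage.mp4")  # allowed again after reopening
```

Enforcing the read-only rule inside the modifying method, rather than in each caller, keeps an archived case from being changed through any path.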